An AI Skeptic's Primer

The photo above is of the Trundholm sun chariot, a Nordic Bronze Age artifact dated to about 1400 BC. It’s likely a representation of Skinfaxi, the horse that pulled Dagr (the personification of day) across the sky (Wikipedia).

People have believed all sorts of things that later turned out not to be true.

I wanted to review my assumptions about AI tools based on sources I’ve collected recently and over the last few years. My core thoughts remain the same:

Current AI tools like ChatGPT and Gemini are “mimicry machines” whose output looks like there is reasoning behind it, but the output is all based on statistics and publicly available sources. This is why hallucinations and fabricated sources persist, and why AI tools are so scarily vulnerable to bad actors.

There are definite limits to use cases. It seems to me the tools currently available should mostly be targeted at lower-value use cases, and definitely not mission-critical ones. If AI tools are assisting with or are in charge of higher-value cases, then it is imperative that time and resources be spent on setting up precautions, guard rails, and multiple layers of human verification.

AI tools at the moment seem to be best at well-defined tasks with little novelty. From the perspective of a business, good outcomes come from using AI tools to make better use of other embedded tools (like software) and all the data within the business. However, in circumstances where there is novelty or where creativity is required, AI seems to be average or pretty bad.

Does AI Have Game?

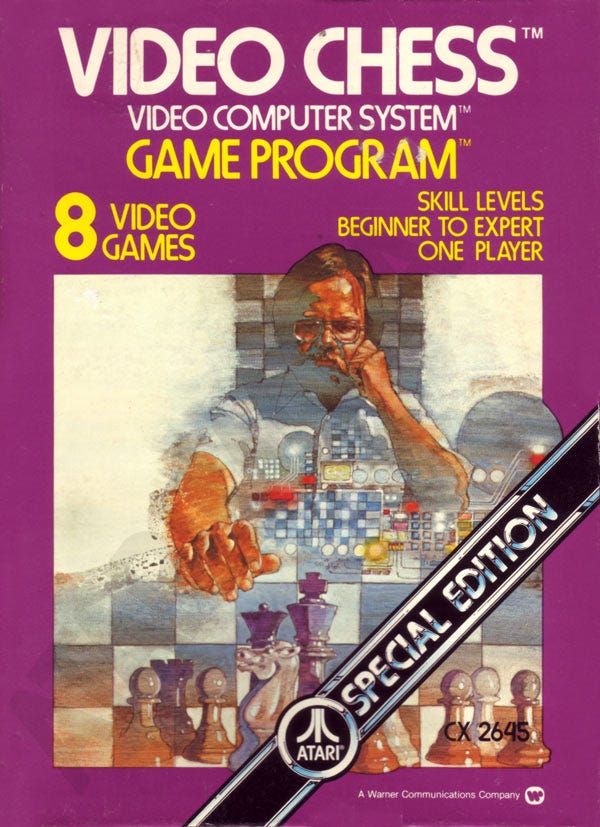

For example, let’s consider how AI performs in three different games. First is chess. It’s a game with simple rules and large archives of play-by-play matches and opening sequences. The data for learning to “get good” at chess are available on the internet, the primary source for LLM learning. An uninformed person might think chess would be a perfect domain for ChatGPT to show off. However, despite ChatGPT having access to the data and despite being powered by extraordinarily expensive GPUs, ChatGPT could not even beat the Atari 2600’s “Video Chess” program running on hardware from 1977.

The second game is NetHack.

NetHack “is an open source single-player roguelike video game, first released in 1987” that used only a simple ASCII text-based user interface. Many gamer historians consider NetHack to be one of the oldest and most difficult computer games in history. Compared to chess, NetHack is much more open-ended, but still bounded by a finite set of rules and operations.

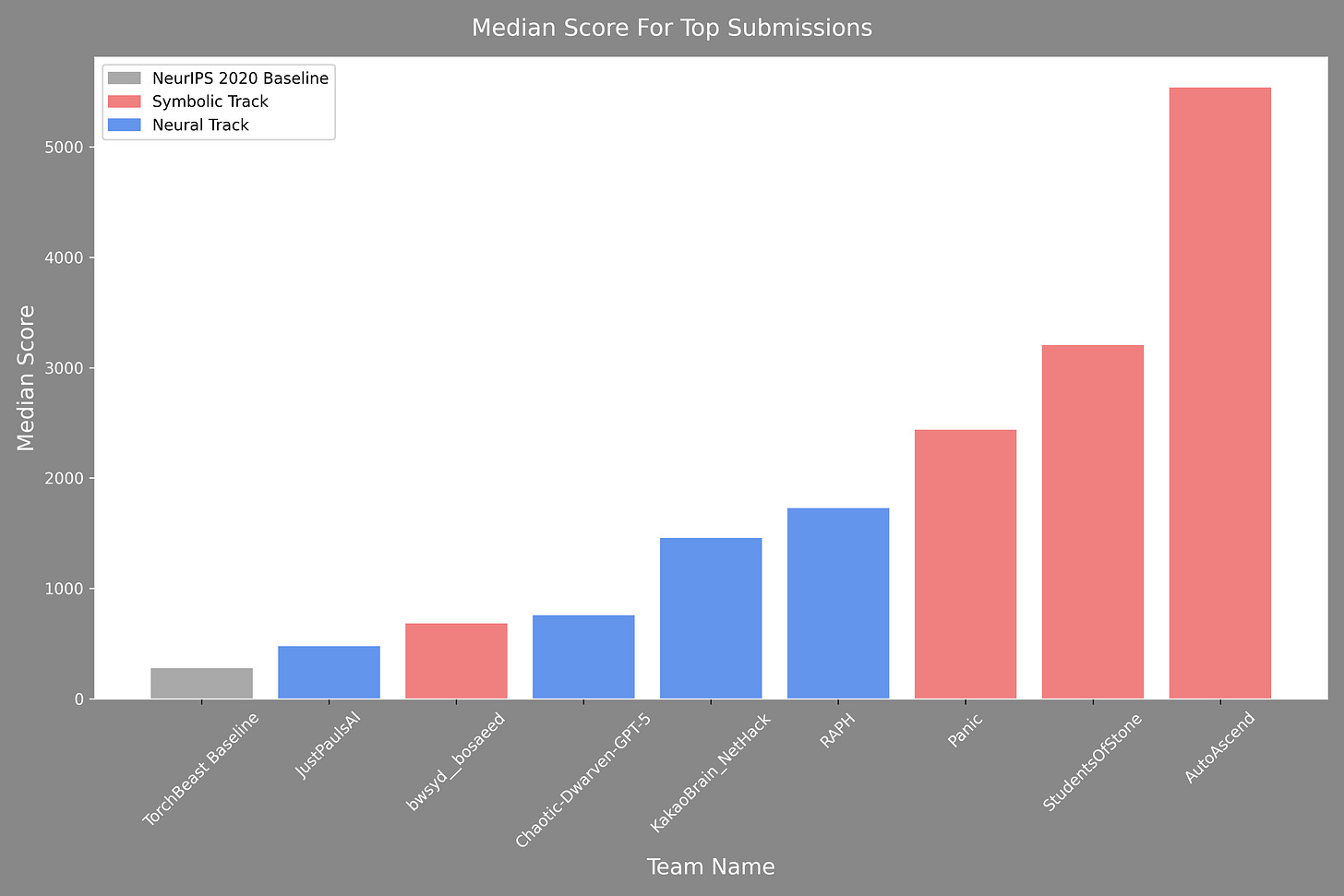

We can assess how AI agents performed in this game during the NetHack Challenge of 2021. The goal of the challenge was to have engineering teams build agents, based either on symbolic systems or on deep learning/neural networks, to play NetHack and to see which agent was the best. To explain the difference between the two types of agents: symbolic agents rely on custom, pre-defined logic, while neural-network agents form their own internal rules over many training iterations. The top performer in this challenge was a symbolic agent, and it vastly outperformed the top neural net (i.e., AI) agent.
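To make the distinction above concrete, here is a toy sketch in Python. It is not taken from the NetHack Challenge or from any team's agent. The scenario (deciding whether to heal based on remaining hit points), the 0.3 threshold, the training examples, and all function names are assumptions made up for illustration. The symbolic agent's rule is written by hand. The learned agent is a single-neuron perceptron, about the smallest possible stand-in for a neural network, which arrives at its own threshold by being corrected repeatedly on examples.

```python
from typing import List, Tuple

HEAL, FIGHT = 1, 0  # hypothetical actions for this toy scenario


def symbolic_agent(hp_fraction: float) -> int:
    """Hand-written rule: a designer picked the 0.3 threshold in advance."""
    return HEAL if hp_fraction < 0.3 else FIGHT


def train_perceptron(
    examples: List[Tuple[float, int]],
    max_epochs: int = 1000,
    lr: float = 0.1,
) -> Tuple[float, float]:
    """Learn a weight and a bias by correcting mistakes over many passes."""
    w, b = 0.0, 0.0
    for _ in range(max_epochs):
        mistakes = 0
        for x, label in examples:
            pred = HEAL if w * x + b > 0 else FIGHT
            error = label - pred  # +1, 0, or -1
            if error != 0:
                w += lr * error * x
                b += lr * error
                mistakes += 1
        if mistakes == 0:  # every example is classified correctly
            break
    return w, b


def learned_agent(hp_fraction: float, w: float, b: float) -> int:
    """Apply whatever rule the training loop ended up with."""
    return HEAL if w * hp_fraction + b > 0 else FIGHT


# Made-up training data: (fraction of hit points left, what to do).
EXAMPLES = [
    (0.10, HEAL), (0.20, HEAL), (0.25, HEAL),
    (0.50, FIGHT), (0.70, FIGHT), (0.90, FIGHT),
]

w, b = train_perceptron(EXAMPLES)

for hp, _ in EXAMPLES:
    print(hp, symbolic_agent(hp), learned_agent(hp, w, b))
```

Both agents agree on the six examples, but the learned threshold only has to land somewhere between 0.25 and 0.5, so the two can disagree on cases the training data never covered.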

To be fair, this challenge was four years ago and AI models have improved remarkably since then. However, I would bet a few hundred dollars that if this challenge were held again today, it would still be the specifically designed programs that outperform the AI agents.

A fair critique of these results is that these types of AI tools were never designed to excel at chess or NetHack. It’s like using a hammer to dig a trench. Generative AI tools were designed to predict answers using statistics. Thus, they excel in many other areas of use, just not chess or NetHack.

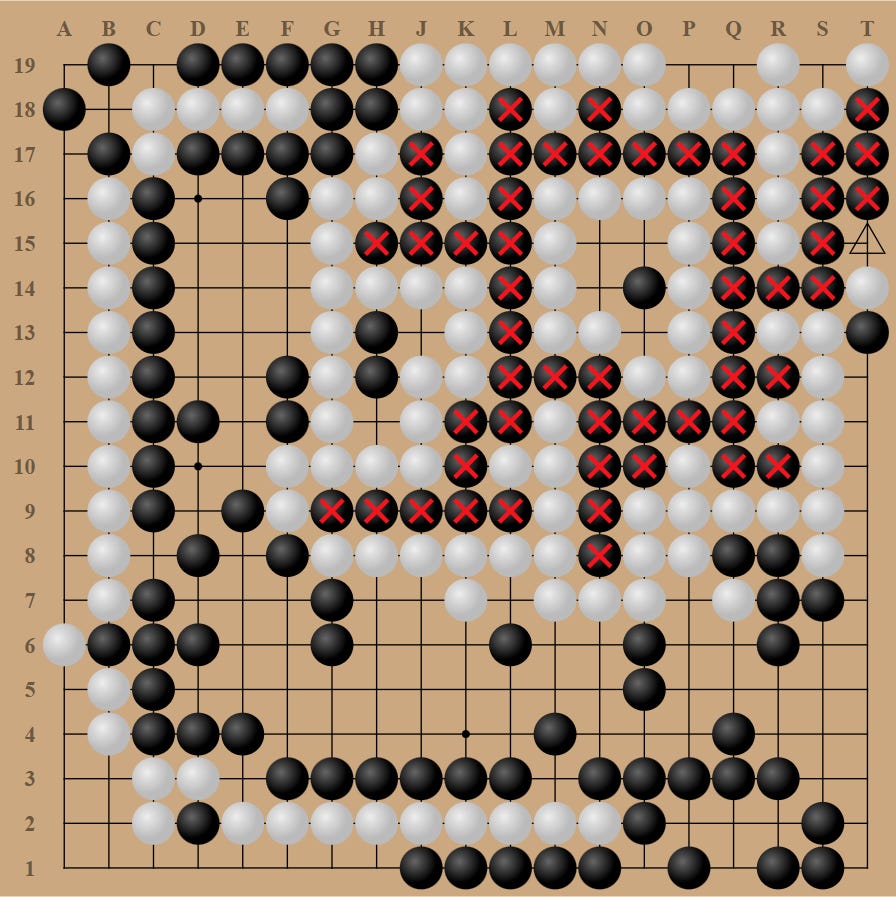

So, let’s move to the third example: Go.

Over a decade ago, AI researchers at DeepMind Technologies created AlphaGo based on neural network training with the goal of defeating a human world champion. In 2016, AlphaGo beat the world champion Lee Sedol in a 4-1 victory (there’s a great documentary about this you can watch on YouTube). This was an amazing achievement because experts at the time thought the mastery of this “impossible” game by AI was at least a decade away. On a human note, Sedol unfortunately quit Go in 2019 because he felt AI was unbeatable.

So you might be surprised to learn that in 2022, an amateur Go player by the name of Kellin Pelrine defeated KataGo, a program even stronger than AlphaGo. A team of researchers at FAR AI developed their own adversarial program to discover weaknesses in KataGo. They then taught the appropriate strategies to Pelrine, who went on to win 14 out of 15 games against KataGo.

Despite the fact it was a different AI system discovering the winning strategies, it was still the human that enacted them. That a human can beat one of the best AI-based programs in the game of Go is the interesting, good news. The bad news is that any AI system, whether based on generative language modeling or based on strategic reinforcement learning, can be exploited. In a post entitled “Even Superhuman Go AIs Have Surprising Failure Modes”, FAR AI wrote this (emphasis mine):

Our results also give some general lessons about AI outside of Go. Many AI systems, from image classifiers to natural language processing systems, are vulnerable to adversarial inputs: seemingly innocuous changes such as adding imperceptible static to an image or a distractor sentence to a paragraph can crater the performance of AI systems while not affecting humans. Some have assumed that these vulnerabilities will go away when AI systems get capable enough—and that superhuman AIs will always be wise to such attacks. We’ve shown that this isn’t necessarily the case: systems can simultaneously surpass top human professionals in the common case while faring worse than a human amateur in certain situations.

This is concerning: if superhuman Go AIs can be hacked in this way, who’s to say that transformative AI systems of the future won’t also have vulnerabilities? This is clearly problematic when AI systems are deployed in high-stakes situations (like running critical infrastructure, or performing automated trades) where bad actors are incentivized to exploit them. More subtly, it also poses significant problems when an AI system is tasked with overseeing another AI system, such as a learned reward model being used to train a reinforcement learning policy, as the lack of robustness may cause the policy to capably pursue the wrong objective (so-called reward hacking).

With AI tools being hyped to an extent that many people believe they can do almost anything, we should pause to think about the implications of AI’s performance in these three games. Can AI be a Holy Grail when it comes to performance and efficiency in all areas of life? One should also question the seeming inevitability that AI will replace traditional software, whether it’s highly embedded horizontal enterprise software or highly embedded, industry-specific vertical software.

Wrapping Up My Thoughts

I skew to Baldur Bjarnason’s view that perhaps the most impressive thing about an LLM-based agent is its illusion of intelligence—based on “a variation on the psychic’s con”—where the “illusion is in the mind of the user and not in the LLM itself.”

If that observation is even halfway correct, one must use LLM-based AI tools with caution and full awareness they are just prediction machines, albeit very good ones. A metaphor I’ve come across several times is that the output from an LLM should be treated as if it came from an unreliable intern.

I’m also certain AI tools will continue to proliferate. I myself use Google’s Gemini and NotebookLM to jump-start research, brainstorm, and organize writing themes and ideas. Yet, I always double-check and verify before I trust anything these tools may have touched or created.

From an investment standpoint, the biggest problem with AI is the extraordinary hype surrounding it over the last three years. On many aspects of this topic, it has been a challenge to ascertain what is probable versus improbable. Many people think these tools can do almost anything. Some investors claim the total addressable market is in the trillions of dollars. Putting on my skeptic’s cap, I think this is obviously what all of the big venture capitalists want (or need) you to think. Whether it turns out to be true or not won’t make much of a difference to them. They will make a ton of money either way.

All that said, I remain skeptical and cautious. I also do my best to be genuinely curious and open-minded. Many things about AI are great, but I do not think AI is as great as many people seem to think. Furthermore, I do not think the market for AI will be as big as venture capitalists are currently promoting. Or if it is, it will take longer than advertised to get to the size of revenues, let alone profits, they envision.

Amara’s Law, stated in 1978, is applicable once again:

“We tend to overestimate the effect of a technology in the short run and underestimate the effect in the long run.”

—Roy Charles Amara, President of the Institute for the Future

I hope the collection of links below will help increase your knowledge about AI, especially as it pertains to its shortcomings and risks.

Core Reasons for AI Shortcomings

Generative AI: What You Need To Know

A comprehensive list of the basic facts and shortcomings about generative AI. I recommend reading this in its entirety.

AI Snake Oil: What Artificial Intelligence Can Do, What It Can’t, and How to Tell the Difference by Arvind Narayanan and Sayash Kapoor

“Gary Marcus on the Massive Problems Facing AI & LLM Scaling” - The Real Eisman Playbook Episode 42

A great and basic overview of AI and LLMs, the best and worst use cases for AI, and why Gary Marcus thinks that deep learning is hitting the limit for further improvements.

“Generative AI’s crippling and widespread failure to induce robust models of the world” - Gary Marcus

“The Reverse-Centaur’s Guide to Criticizing AI” - Cory Doctorow

Is there an AI bubble?

“AI faces closing time at the cash buffet”, The Register, Dec 24, 2025.

“The Hater’s Guide To NVIDIA”, Ed Zitron, Nov. 24, 2025.

“The AI that we’ll have after AI”, Cory Doctorow, Oct. 16, 2025.

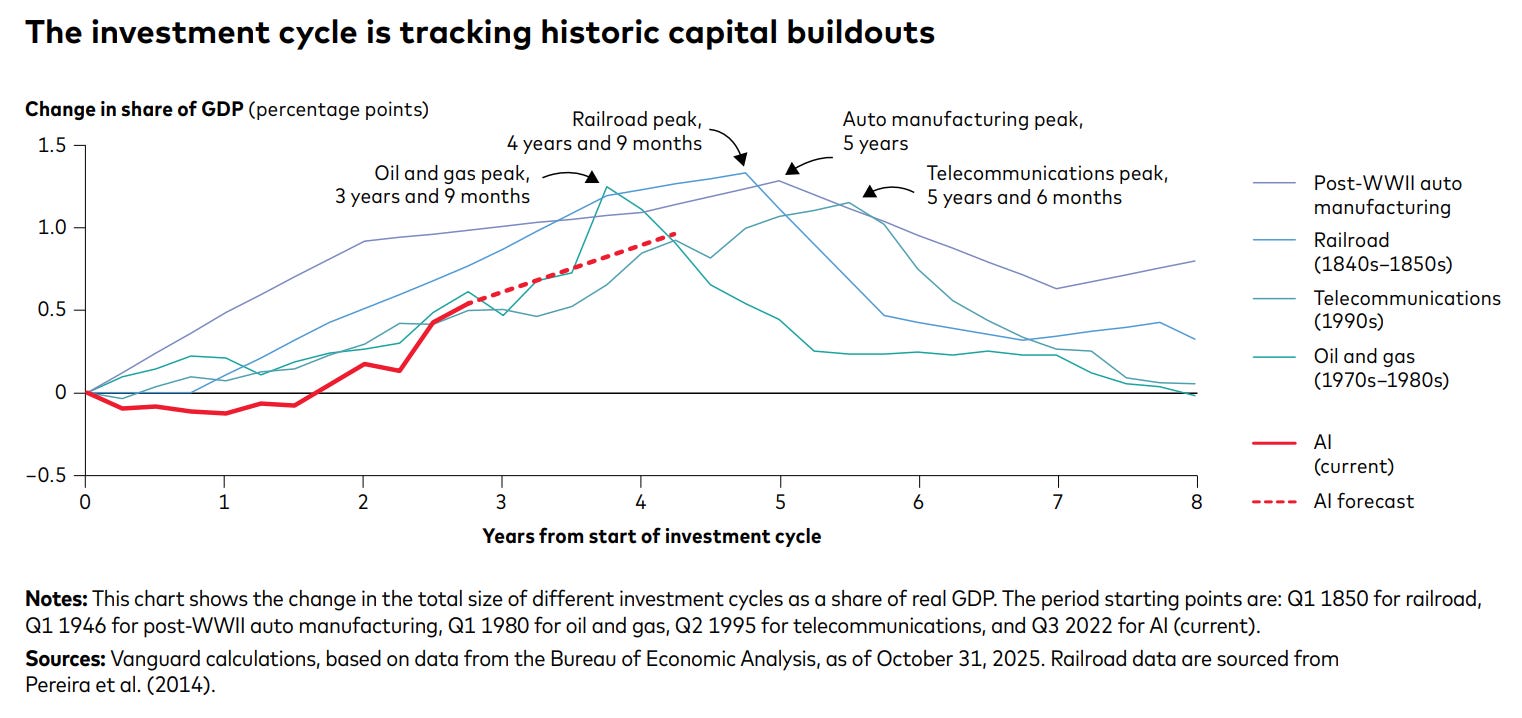

“AI exuberance: Economic upside, stock market downside”, Vanguard, December 2025.

Based on prior capital buildout cycles, Vanguard believes this AI cycle is still in the early years and might peak in the next two to three years.

“State of the Markets”, Andreessen Horowitz, January 22, 2026.

A really slick slide deck that paints a grand picture. A portion of it addresses whether or not AI is in a bubble. This VC firm is incredibly bullish on AI given they believe it can capture a portion of the much larger market of U.S. white collar payroll ($6 trillion) compared to enterprise software spend ($300-$350 billion).

They do admit that debt has just arrived to help finance the massive AI spending, but everything is still totally fine and reasonable.

Demand for GPUs and TPUs still far exceeds supply. Amin Vahdat, VP and GM of AI and Infrastructure at Google, says Google has 8-year-old TPUs that still have 100% utilization. (My follow-up question would have been: “What % of TPUs of that generation actually survived that long?”)

One slide states that in the early 2000s, only 7% of the recently laid fiber optic cable was lit. Compare that to the nearly full utilization of GPUs in AI data centers today. I assume this is to reassure everyone that AI is currently not in a bubble.

The Risks of AI

The risks associated with AI are a category that I believe is totally underappreciated. The proliferation of AI tools comes with serious risks to security, privacy, physical health, and to any democracy that has a desire to function well.

“LLMs + Coding Agents = Security Nightmare”, Gary Marcus and Nathan Hamiel, Aug. 17, 2025.

This is an article that really scared me and something everyone should read. AI coding tools in particular are yet another vector for hackers to gain Remote Code Execution. “What terrified Gary was that the NVIDIA researchers showed that the number of ways to do this—engendering all sorts of negative consequences, including RCEs—was basically infinite.”

“Epic’s overhaul of a flawed algorithm shows why AI oversight is a life-or-death issue”, STAT, July 11, 2025.

“AI-generated code could be a disaster for the software supply chain”, Ars Technica, April 29, 2025.

“Bad Actors are Grooming LLMs to Produce Falsehoods”, The American Sunlight Project, July 11, 2025.

Will AI Replace Software?

“Where’s the Shovelware? Why AI Coding Claims Don’t Add Up”, Mike Judge, Sep. 3, 2025.

“The AI Shovelware Question: One Month Later”, Mike Judge, Sep. 28, 2025.

I think Judge’s comment that AI tools have the psychological effect of intermittent reinforcement (like gambling) is spot-on: “Occasionally it nails the prompt first try, which is genuinely impressive, mixed with many misses. You remember the jackpots and forget the hours of pumping in tokens.”

Like Judge, I am highly suspicious of reports of productivity improvements. Are the reports based on subjective survey responses or are they actually based on long-term data that encompasses all the work, re-work, and debugging that may have resulted from the use of AI coding tools? And if it’s a customer-facing AI tool, are these companies measuring customer satisfaction or counting the number of customers retained or lost after getting rid of the human service reps? Or is it simply the short-term savings that come from the difference between what a human costs and what the AI tool costs?

“Aaron Levie: Why Startups Win In The AI Era”, Y Combinator, Sep. 16, 2025.

Aaron Levie, CEO and co-founder of Box, fields questions about AI and software. When asked about whether companies will use AI to code cheaper versions of software they’ve historically used, Levie cites Geoff Moore. He says every company has to decide what is core and what is context within their business. If it’s Disney, content creation is core to the business while HR software is just context.

And assuming Disney (or anyone else) did create their own HR software, Levie says (my emphasis): “But, you know, here’s the problem. Three years from now, there’s going to be a bug. That bug is going to … pay people the wrong amount of money. I don’t want to have to go and call my IT team in the middle of the night to be like, ‘Shit, you have to go fix this bug that paid everybody the wrong amount of money.’ I want to be able to go to a company that I know … I can sue if they fuck up, or switch to a competitor … because I can’t sue my internal IT team and I certainly can’t sue Anthropic.”

“Cisco AI Summit | Special live event with Jensen Huang”, Cisco, Feb. 3, 2026.

Jensen Huang, CEO of NVIDIA, says “it is the most illogical thing in the world” that software will be replaced by AI. He argues software is a tool, and that even in the ultimate world where artificial general intelligence is a reality, AI will of course use the tools that already exist and work. It won’t be reinventing what already works. Jensen’s rhetorical question was, would you expect the AI to use a hammer or would you expect it to invent a new hammer? Would you use proven tools from SAP or Cadence or Synopsys, or would you reinvent them?

“Building Insight Partners”, Colossus, Sep. 16, 2025.

Colossus interviews Jeff Horing, a co-founder of Insight Partners. Jeff is “not losing any sleep” over whether SAP or other software businesses with mission-critical products will be displaced by new, AI-coded software. On the other hand, businesses could certainly allocate more resources to AI tools and agents that would otherwise have gone to the incumbents. If that reallocation of budgets becomes reality, it will affect the growth rates of the traditional software companies, which in turn will affect their valuations.

“Modern software quality, or why I think using language models for programming is a bad idea”, Baldur Bjarnason, May 30, 2023.

Workslop and “Enshittification”

“Workslop is the new busywork. And it’s costing millions.” - BetterUp

“Research from BetterUp Labs and Stanford Social Media Lab uncovers how AI-generated ‘slop’ masquerades as productivity.” This survey showed 40% of respondents felt they had received workslop, which on average took 2 hours to fix at an average monthly cost of $186 per employee. These data are based on an online survey of 1,150 full-time U.S. desk workers conducted in September 2025 by BetterUp in partnership with the Stanford Social Media Lab.
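For a rough sense of scale, the survey figures can be turned into back-of-the-envelope arithmetic. This sketch assumes the $186 monthly cost applies to each employee who receives workslop and that the 40% share holds across a whole company. The 1,000-person headcount and the function name are hypothetical.

```python
def annual_workslop_cost(
    headcount: int,
    affected_share: float,
    monthly_cost_per_affected: float = 186.0,  # survey figure, in dollars
) -> float:
    """Estimate yearly workslop cost under the stated assumptions."""
    affected_employees = headcount * affected_share
    return affected_employees * monthly_cost_per_affected * 12


# Hypothetical company of 1,000 desk workers, 40% of whom receive workslop.
estimate = annual_workslop_cost(headcount=1000, affected_share=0.40)
print(f"${estimate:,.0f} per year")
```

Under those assumptions, a 1,000-person company would lose roughly $892,800 a year.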

This quote from an anonymous project manager is a goodie: “Receiving this poor quality work created a huge time waste and inconvenience for me. Since it was provided by my supervisor, I felt uncomfortable confronting her about its poor quality and requesting she redo it. So instead, I had to take on effort to do something that should have been her responsibility, which got in the way of my other ongoing projects.”

“Why People Create AI ‘Workslop’—and How to Stop It”, HBR, January 16, 2026.

“The Unsettling Rise of AI Real-Estate Slop”, The Atlantic, February 4, 2026.

Over-Reliance on AI Might Stunt Professional Development

“How AI assistance impacts the formation of coding skills”, Anthropic, Jan. 26, 2026.

Anthropic conducted a randomized controlled trial with 52 software developers as participants to test whether there were potential downsides to using AI assistance. The results: “On average, participants in the AI group finished about two minutes faster, although the difference was not statistically significant. There was, however, a significant difference in test scores: the AI group averaged 50% on the quiz, compared to 67% in the hand-coding group—or the equivalent of nearly two letter grades.”

“AWS CEO Says Replacing Junior Developers with AI Is the Dumbest Thing He’s Ever Heard”, Final Round AI, Aug. 25, 2025.

Using AI tools to make senior devs more productive, and then saving money by not hiring as many junior devs, is a short-term gain but a long-term detriment. Professional development, learning by actually doing, and coaching and mentorship from another human are the pathway for any enterprise to remain viable for the long term.

Productivity

This category overlaps with workslop, but has more specific examples.

“AI, layoffs, productivity and The Klarna Effect”, Gary Marcus, Aug. 23, 2025.

Klarna fired customer service employees and replaced them with chat bots. It quickly reversed course.

“A tale of three customer service chatbots”, Cory Doctorow, Nov. 12, 2025.

Doctorow’s experience with three chatbots. Two were awful, but one was positive because he easily tricked it into revealing information it should not have.

“AI agents get office tasks wrong around 70% of the time, and a lot of them aren’t AI at all”, The Register, June 29, 2025.

One key finding was 95% of organizations reported no measurable ROI from AI investments despite widespread adoption.

Success Stories?

“Claude Code saved us 97% of the work — then failed utterly”, Thoughtworks, March 10, 2025.

“How Norwegian giant NBIM spots portfolio managers’ biases using AI”, Investment Magazine, June 26, 2025.

“The 47-Hour Marathon That Almost Made Me Quit Claude Code — Until Everything Changed on September 17th”, Reza Rezvani, Sep. 18, 2025.

A story of how one person and his team went all-in on Claude Code. Then Claude became their worst enemy because of changes Anthropic made without their knowledge. This team was suddenly spending more time on managing Claude Code’s failures than doing actual coding. But then Anthropic made some adjustments and things started working again. This group is apparently still all-in on Claude Code.

“JPMorgan Chase’s Gen AI implementation: 450 use cases and lessons learned”, Tearsheet, May 27, 2025.

“Salesforce CEO confirms 4,000 layoffs ‘because I need less heads’ with AI”, CNBC, Sep. 2, 2025.

“Some companies tie AI to layoffs, but the reality is more complicated”, AP, February 2, 2026.


Disclaimers for this Substack

The content of this publication is for entertainment and educational purposes only and should not be considered a recommendation to buy or sell any particular security. The opinions expressed herein are those of Douglas Ott in his personal capacity and are subject to change without notice. Consider the investment objectives, risks, and expenses before investing.

Investment strategies managed by Andvari Associates LLC, Doug’s employer, may have a position in the securities or assets discussed in any of its writings. Doug himself may have a position in the securities or assets discussed in any of his writings. Securities mentioned may not be representative of Andvari’s or Doug’s current or future investments. Andvari or Doug may re-evaluate their holdings in any mentioned securities and may buy, sell or cover certain positions without notice.

Data sources for all charts come from SEC filings, Koyfin, and other publicly available information.
